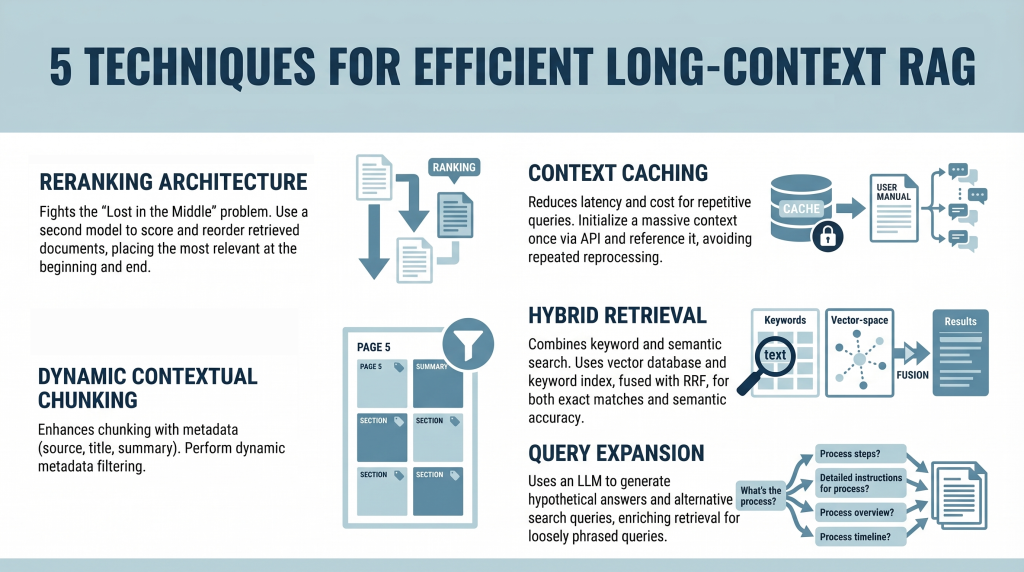

5 Techniques for Efficient Long-Context RAG

In this article, you will learn how to build efficient long-context retrieval-augmented generation (RAG) systems using modern techniques that address attention limitations and cost challenges.

Topics we will cover include:

- How reranking mitigates the “Lost in the Middle” problem.

- How context caching reduces latency and computational cost.

- How hybrid retrieval, metadata filtering, and query expansion improve relevance.

Introduction

Retrieval-augmented generation (RAG) is undergoing a major shift. For years, the RAG mantra was simple: “Break your documents into smaller pieces, embed them, and retrieve the most relevant pieces.” This was necessary because large language models (LLMs) had context windows that were expensive and limited, typically ranging from 4,000 to 32,000 tokens.

Now, frontier models such as Google’s Gemini Pro and Anthropic’s Claude Opus have pushed these limits much further, with context windows of hundreds of thousands of tokens and, in some cases, 1 million or more. In theory, you could now paste several full-length novels into a single prompt. In practice, however, this capability introduces two major challenges:

- The “Lost in the Middle” Problem: Research has shown that models use information placed in the middle of a long prompt less reliably than information at the beginning or the end.

- The Cost Problem: Processing a million tokens for every query is computationally expensive and slow. It’s like rereading an entire encyclopedia every time someone asks a simple question.

This tutorial explores five practical techniques for building efficient long-context RAG systems. We move beyond simple chunking and examine, from a developer’s perspective, strategies for mitigating attention loss and reusing already-processed context.

1. Implementing a Reranking Architecture to Fight “Lost in the Middle”

The “Lost in the Middle” problem, identified in a 2023 study by researchers at Stanford and UC Berkeley, reveals a critical limitation in how LLMs attend to long inputs. When given a long context, models perform best when the relevant information appears at the beginning or end. Information buried in the middle is significantly more likely to be ignored or misinterpreted.

Instead of inserting retrieved documents directly into the prompt in their original order, introduce a reranking step.

Here is the developer workflow:

- Retrieval: Use a standard vector database (such as Pinecone or Weaviate) to retrieve a larger candidate set (e.g. top 20 instead of top 5)

- Reranking: Pass these candidates through a specialized cross-encoder reranker (such as the Cohere Rerank API or a Sentence-Transformers cross-encoder model) that scores each document against the query

- Selection: Keep the 5 documents with the highest reranker scores

- Context Placement: Place the most relevant document at the beginning and the second-most relevant at the end of the prompt. Position the remaining three in the middle

This placement puts the most important information in the positions where the model is most likely to use it.
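The reranking and placement steps can be sketched in plain Python. This is a minimal sketch, assuming the reranker is exposed as a `score_fn` callable that takes `(query, document)` pairs and returns one relevance score per pair, and that the vector-database retrieval of the larger candidate set has already happened. The names `rerank`, `place_for_long_context`, and `build_prompt` are hypothetical.

```python
from typing import Callable, Sequence


def rerank(
    query: str,
    candidates: Sequence[str],
    score_fn: Callable[[list[tuple[str, str]]], Sequence[float]],
    top_k: int = 5,
) -> list[str]:
    """Score each (query, document) pair and return the top_k documents, best first."""
    pairs = [(query, doc) for doc in candidates]
    scores = list(score_fn(pairs))  # score_fn is an assumed cross-encoder-style scorer
    if len(scores) != len(pairs):
        raise ValueError("score_fn must return one score per pair")
    ranked = sorted(zip(candidates, scores), key=lambda item: item[1], reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


def place_for_long_context(ranked_docs: Sequence[str]) -> list[str]:
    """Put rank 1 first, rank 2 last, and all remaining documents in the middle."""
    docs = list(ranked_docs)
    if len(docs) < 3:
        return docs  # with 1 or 2 docs, best-first order already satisfies the rule
    return [docs[0]] + docs[2:] + [docs[1]]


def build_prompt(query: str, ordered_docs: Sequence[str]) -> str:
    """Assemble a simple prompt with the documents in the chosen order."""
    context = "\n\n".join(
        f"[Document {i + 1}]\n{doc}" for i, doc in enumerate(ordered_docs)
    )
    return f"Answer using the documents below.\n\n{context}\n\nQuestion: {query}"
```

One option for `score_fn` is the `predict` method of a sentence-transformers `CrossEncoder` (for example, one loaded from the `cross-encoder/ms-marco-MiniLM-L-6-v2` checkpoint), which accepts a list of text pairs and returns a score per pair. Keeping the scorer injectable lets you swap in a hosted reranker without touching the placement logic.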

2. Leveraging Context Caching for Repetitive Queries

Every additional token in a prompt adds latency and cost, so re-sending the same hundreds of thousands of tokens with each query is wasteful. Context caching addresses this by processing a large, unchanging context once and reusing it across requests.

Think of this as initializing a persistent context for your model.

- Create the Cache: Upload a large document (e.g. a 500,000-token manual) once via an API and define a time-to-live (TTL)

- Reference the Cache: For subsequent queries, send only the user’s question along with a reference ID to the cached context

- Cost Savings: You reduce input token costs and latency, since the cached document is not reprocessed with each query (providers typically still bill cached tokens, but at a reduced rate)

This approach is especially useful for chatbots built on static knowledge bases.
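To make the cost argument concrete, the toy class below models the create, reference, and TTL pattern locally. It is hypothetical and not any provider's SDK: a real cache lives on the provider's side and stores the model's processed state, and the exact API differs between providers. Here "processing" is reduced to counting tokens with a caller-supplied `count_tokens` function so the savings are visible.

```python
import time
import uuid
from dataclasses import dataclass
from typing import Callable


@dataclass
class _CacheEntry:
    document_tokens: int
    expires_at: float


class ToyContextCache:
    """Hypothetical local model of provider-side context caching (not a real SDK)."""

    def __init__(
        self,
        count_tokens: Callable[[str], int],
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._count_tokens = count_tokens
        self._clock = clock
        self._entries: dict[str, _CacheEntry] = {}
        self.tokens_processed = 0  # running total of tokens the "model" had to process

    def create(self, document: str, ttl_seconds: float) -> str:
        """Process the large document once and return a reference ID valid for ttl_seconds."""
        n = self._count_tokens(document)
        self.tokens_processed += n
        cache_id = uuid.uuid4().hex
        self._entries[cache_id] = _CacheEntry(n, self._clock() + ttl_seconds)
        return cache_id

    def query(self, cache_id: str, question: str) -> int:
        """Query against a cached context: only the question's tokens are processed."""
        entry = self._entries.get(cache_id)
        if entry is None or self._clock() >= entry.expires_at:
            self._entries.pop(cache_id, None)  # evict the expired entry, if any
            raise KeyError(f"cache {cache_id!r} is missing or expired; create it again")
        n = self._count_tokens(question)
        self.tokens_processed += n
        return n


def uncached_tokens(
    document: str, questions: list[str], count_tokens: Callable[[str], int]
) -> int:
    """Baseline without caching: the full document is reprocessed with every question."""
    return sum(count_tokens(document) + count_tokens(q) for q in questions)
```

With the 500,000-token manual from the example above, answering 100 questions without a cache means reprocessing 100 × 500,000 = 50,000,000 document tokens; with a cache, the manual is processed once and each query adds only the question's tokens.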

3. Using Dynamic Contextual Chunking with Metadata Filters

Even with large context windows, relevance remains critical. Simply increasing context size does not eliminate noise.

This approach enhances traditional chunking with structured metadata.

- Intelligent Chunking: Split documents into segments (e.g. 500–1000 tokens) and attach metadata such as source, section title, page number, and summaries

- Hybrid Filtering: Use a two-step retrieval process:

  - Metadata Filtering: Narrow the search space based on structured attributes (e.g. date ranges or document sections)

  - Semantic Search: Perform similarity search only on filtered candidates

This reduces irrelevant context and improves precision.
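A minimal sketch of both steps follows, assuming whitespace-separated words as a stand-in for tokens, embeddings already computed by whatever model you use, and metadata filters expressed as a Python predicate. `Chunk`, `chunk_with_metadata`, and `filter_then_search` are hypothetical names. Vector databases such as Pinecone or Weaviate apply metadata filters inside the query itself, so in production the filter would be passed to the database rather than run in Python.

```python
import math
from dataclasses import dataclass, field
from typing import Callable, Sequence


@dataclass
class Chunk:
    text: str
    metadata: dict = field(default_factory=dict)
    embedding: list[float] = field(default_factory=list)


def chunk_with_metadata(
    text: str, base_metadata: dict, size: int = 500, overlap: int = 50
) -> list[Chunk]:
    """Split text into overlapping word windows (a rough token proxy) and tag each chunk."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be in [0, size)")
    words = text.split()
    chunks: list[Chunk] = []
    for i, start in enumerate(range(0, len(words), size - overlap)):
        window = words[start:start + size]
        chunks.append(Chunk(" ".join(window), {**base_metadata, "chunk_index": i}))
        if start + size >= len(words):
            break  # this window already reaches the end of the text
    return chunks


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def filter_then_search(
    chunks: Sequence[Chunk],
    query_embedding: Sequence[float],
    where: Callable[[dict], bool],
    top_k: int = 5,
) -> list[Chunk]:
    # Step 1: the metadata filter shrinks the candidate set before any vector math.
    candidates = [c for c in chunks if where(c.metadata)]
    # Step 2: similarity search runs only over the chunks that survived the filter.
    candidates.sort(key=lambda c: cosine(c.embedding, query_embedding), reverse=True)
    return candidates[:top_k]
```

Because the filter runs before similarity scoring, a chunk from the wrong section or date range cannot crowd out relevant ones, even when its embedding is very close to the query.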

4. Combining Keyword and Semantic Search with Hybrid Retrieval

Vector search captures meaning but can miss exact keyword matches, such as product codes, error messages, or function names, which are essential for technical queries.

Hybrid search combines semantic and keyword-based retrieval.

- Dual Retrieval:

  - Vector database for semantic similarity

  - Keyword index (e.g. Elasticsearch) for exact matches

- Fusion: Use Reciprocal Rank Fusion (RRF) to combine the two ranked lists, which favors results that rank highly in both

- Context Population: Insert the fused results into the prompt using the placement strategy from technique 1, with the strongest results at the beginning and end

This ensures both semantic relevance and lexical accuracy.
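Reciprocal Rank Fusion scores each document as the sum of 1 / (k + rank) over every ranked list it appears in, with ranks starting at 1 and k commonly set to 60, the value used in the original RRF paper. Because it uses only ranks, it sidesteps the fact that keyword scores and cosine similarities are on different scales. The sketch below assumes each retriever has already returned a ranked list of document IDs; `reciprocal_rank_fusion` is a hypothetical helper name.

```python
from collections import defaultdict
from typing import Sequence


def reciprocal_rank_fusion(
    rankings: Sequence[Sequence[str]], k: int = 60
) -> list[tuple[str, float]]:
    """Fuse ranked ID lists: score(d) = sum over lists of 1 / (k + rank), rank starting at 1."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; documents found by both retrievers accumulate two terms.
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```

For example, fusing a vector ranking `["d1", "d2", "d3"]` with a keyword ranking `["d3", "d1", "d4"]` puts `d1` first and `d3` second, since both appear near the top of both lists, while `d2` and `d4` each contribute only a single term.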

5. Applying Query Expansion with Summarize-Then-Retrieve

User queries often differ from how information is expressed in documents. Query expansion helps bridge this gap.

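Use a lightweight LLM to rewrite the user’s question into several alternative search queries, run retrieval for each variant, and merge the results into a single candidate list.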

This tends to improve recall on inferential and loosely phrased queries.
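A minimal multi-query sketch follows, assuming `generate` is any function that sends a prompt to your lightweight LLM and returns its text, and `search` is any retriever that returns `(doc_id, score)` pairs whose scores are comparable across queries. The prompt wording and the helper names `expand_query` and `multi_query_retrieve` are hypothetical.

```python
from typing import Callable, Iterable

# Hypothetical prompt template; tune the wording for your model.
EXPANSION_PROMPT = (
    "Rewrite the question below as {n} alternative search queries, one per line. "
    "Use different wording and terminology.\n\nQuestion: {question}"
)


def expand_query(question: str, generate: Callable[[str], str], n: int = 3) -> list[str]:
    """Return the original question plus up to n LLM-generated variants."""
    raw = generate(EXPANSION_PROMPT.format(n=n, question=question))
    variants = [line.strip("-• ").strip() for line in raw.splitlines()]
    seen: set[str] = set()
    queries: list[str] = []
    # Keep the original first; drop blank lines and case-insensitive duplicates.
    for q in [question, *variants]:
        key = q.lower()
        if q and key not in seen:
            seen.add(key)
            queries.append(q)
    return queries[: n + 1]


def multi_query_retrieve(
    question: str,
    generate: Callable[[str], str],
    search: Callable[[str], Iterable[tuple[str, float]]],
    n: int = 3,
    top_k: int = 5,
) -> list[str]:
    """Retrieve with every query variant and keep each document's best score."""
    best: dict[str, float] = {}
    for q in expand_query(question, generate, n):
        for doc_id, score in search(q):
            best[doc_id] = max(score, best.get(doc_id, float("-inf")))
    return sorted(best, key=best.get, reverse=True)[:top_k]
```

Merging by each document's best score keeps the sketch short; when the variants are served by retrievers whose scores are not comparable, a rank-based merge such as RRF is a reasonable alternative.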

Conclusion

The emergence of million-token context windows does not eliminate the need for retrieval-augmented generation—it reshapes it. While long contexts reduce the need for aggressive chunking, they introduce challenges related to attention distribution and cost.

By applying reranking, context caching, metadata filtering, hybrid retrieval, and query expansion, you can build systems that are both scalable and precise. The goal is not simply to provide more context, but to ensure the model consistently focuses on the most relevant information.